Accelerating AI to power the future of Intelligence

NVIDIA BLACKWELL B200-SXM6

Our Solutions

Choose the perfect infrastructure for your AI workloads.

On-Demand Cloud GPUs

Scale instantly with dedicated full-machine GPU rentals—no long-term commitments.

Reserved Cluster

Dedicated GPU clusters available for rent in Bare Metal or Cloud deployment.

Fine-tuning Jobs

Optimize AI models with your data through expert fine-tuning services.

Trusted by 10,000+ Researchers, AI Startup Teams, Engineers, and AI Endeavors across the world.

HPC-AI's core infrastructure, Colossal-AI, already powers leading global enterprises.

Pricing Comparison

See how our pricing stacks up against the competition.

| GPU Type | HPC-AI.COM | AWS | Lambda | RunPod | CoreWeave |
|---|---|---|---|---|---|

Scale Your AI Workload with the Right Plan

Unlock premium GPUs with additional credits every month.

More questions about our membership plans? See the FAQs.

Why Choose HPC-AI.COM

We deliver enterprise-grade AI infrastructure for teams that demand both performance and cost-efficiency.

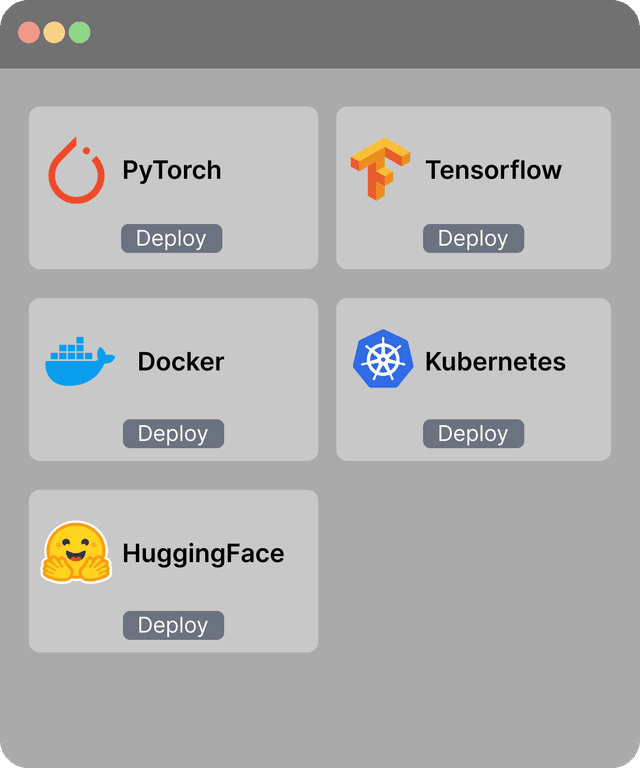

AI-Optimized Stack

Pre-configured AI pipeline for rapid deployment & real-time inference.

Top Performance

High-performance AI infrastructure engineered to deliver exceptional IOPS, ultra-low latency, and massive throughput for the most demanding AI workloads.

99% Uptime

Stability and security guaranteed. Run your jobs with confidence; we back you up.

Powerful APIs

Scale and automate your AI jobs and manage your GPU resources effortlessly.

Ready to accelerate your AI projects?

Contact us to discuss your team's specific requirements.