We Tested 3 LLM APIs And The Results Might Surprise You

Choosing the right LLM API is no longer just about model intelligence but about performance in production.

For modern AI applications, three metrics matter most:

-

Latency (TTFT) → how fast users get the first response

-

Throughput (tokens per second) → how well the system scales

-

Success rate → how reliably the system performs

In this article, we take a closer look at three leading models:

-

MiniMax M2.5

-

Kimi K2.5

-

GLM 5.1

and break down what their real-world performance means for your applications.

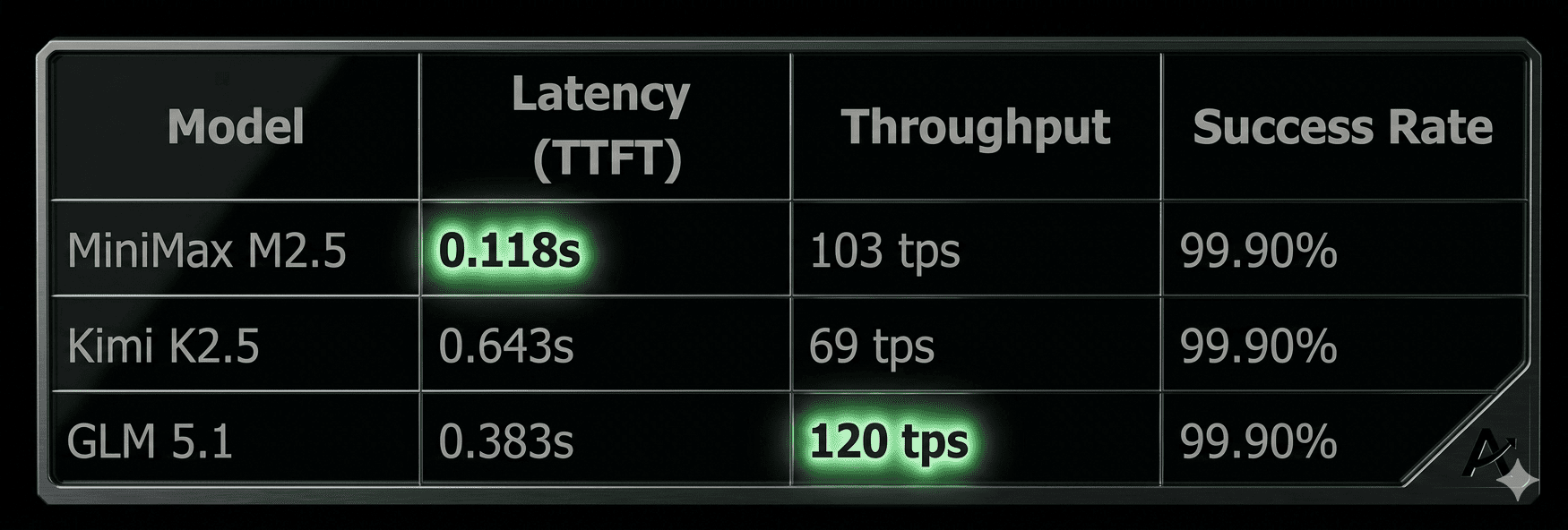

Performance Overview

Key Performance Insights

1. Ultra-Low Latency for Real-Time Applications

With a Time to First Token (TTFT) of just 0.118 seconds, MiniMax M2.5 delivers responses almost instantly. In practice, this means users begin seeing output nearly the moment they submit a request.

This level of responsiveness is critical for:

-

Chatbots and conversational AI, where delays can break the flow of conversation

-

AI copilots, where users expect immediate assistance while coding or writing

-

Real-time interfaces, such as live search, autocomplete, or interactive tools

What makes low latency so impactful is not just speed, it’s perceived performance. Even small delays (300–500ms) can make an application feel sluggish or unresponsive.

At 0.118s, MiniMax M2.5 operates well below that threshold, enabling:

-

Smoother interactions

-

Higher user engagement

-

More natural, human-like experiences

In short, lower latency doesn’t just improve speed but transforms how users experience your product.

2. High Throughput for Scalable Systems

When it comes to handling scale, throughput becomes the defining factor.

GLM 5.1 leads in this category with 120 tokens per second, followed closely by MiniMax M2.5 at 103 tps. This determines how quickly a model can generate content once a response has started.

High throughput is especially important for:

-

High-concurrency applications serving many users simultaneously

-

Batch processing workloads such as document generation or data transformation

-

Content pipelines, where large volumes of text are generated continuously

The impact of higher throughput includes:

-

Faster completion of long responses

-

Reduced queuing under heavy load

-

More efficient use of infrastructure

Simply put, throughput determines how well your system scales under pressure.

3. Balanced Performance for Flexible Use Cases

Kimi K2.5 presents a more balanced performance profile, with a latency of 0.643 seconds and throughput of 69 tokens per second.

While it doesn’t lead in raw speed or scale, this positioning can be advantageous depending on the use case.

It is well-suited for:

-

General-purpose applications that don’t require ultra-fast response times

-

Workflows with moderate concurrency, where extreme throughput isn’t necessary

-

Use cases where consistency and stability are prioritized over peak performance

In many real-world scenarios, not every application needs the fastest or most scalable model. Instead, developers often look for:

-

Predictable performance

-

Stable behavior across different workloads

-

A balance between responsiveness and resource usage

Kimi K2.5 fits naturally into this category, offering a reliable option for teams that value consistency over specialization.

The Hidden Advantage: 99.9% Reliability Across All Models

One of the most important, yet often overlooked, metric is success rate. And all three models deliver 99.9% success rate.

This means:

-

Stable performance at scale

-

Minimal request failures

-

Consistent behavior in production

99.9% is already production-grade. Since it is built for scale, You can grow without worrying about constant failures. With a smooth and reliable user experience, this translates into better user satisfaction and retention. Higher reliability also means less engineering overhead, so your team can focus on building features instead of fixing issues. In real-world systems, this level of reliability is what keeps applications running smoothly.

Choosing the Right Model for Your Use Case

Different applications prioritize different performance characteristics:

For Real-Time Experiences:

Choose MiniMax M2.5

-

Lowest latency (0.118s)

-

Fast user interactions

For High-Scale Workloads:

Choose GLM 5.1

-

Highest throughput (120 tps)

-

Handles large volumes efficiently

For Balanced Performance:

Choose Kimi K2.5

-

Stable, consistent

-

Suitable for general use cases

Why These Metrics Matter in Production

In real-world AI systems:

-

Latency impacts user experience

-

Throughput impacts scalability

-

Success rate impacts reliability

When combined, they determine whether your application:

-

Feels fast

-

Scales effectively

-

Runs without disruption

The best systems are not just powerful but also fast, scalable, and reliable. These models are built for Modern AI Applications. With performance profiles like these, developers can: deploy AI applications with confidence, optimize for specific use cases, and scale without compromising stability. Regardless of what you are building, having access to multiple high-performing models gives you flexibility and control.